Don't Go Full NPC

The case for thinking through it yourself

In December 2017, DeepMind’s AlphaZero played a 100-game match against Stockfish, the strongest traditional chess engine ever built. AlphaZero won 28 games, drew 72, and lost zero. Stockfish evaluated 70 million positions per second through brute-force calculation. AlphaZero evaluated just 80,000, having taught itself chess from scratch in four hours. It played by sacrificing pawns, knights, entire pieces for positional advantages so abstract that Stockfish didn’t register the danger until it was too late.

What's remarkable isn't just that AlphaZero won. It's that it found genuinely novel strategies in a game humans have studied for over a thousand years. Chess was supposed to be largely explored territory. AlphaZero looked at the same board everyone else had been staring at and saw moves no one had considered because they were so unintuitive. It didn't out-compute Stockfish; it fundamentally saw the game differently.

Magnus Carlsen told Lex Fridman in 2022 that watching AlphaZero’s games was “a great joy” and that he spent 2019 trying to incorporate that style into his own play. He didn’t copy specific moves; he tried to absorb a new way of seeing positions. Which makes the next thing he said in that interview interesting:

“I try not to use engines too much on my own because I know that when I play you obviously cannot have help from engines and I feel like often having imperfect knowledge about a position or some engine knowledge can be a lot worse than having no knowledge.”

The best chess player alive, rated number one in the world for over a decade, deliberately limits his use of the strongest analytical tool available. He studied AlphaZero to expand how he thinks, then put the engine away so the thinking would remain his. Even partial reliance on a superhuman tool degrades your own judgment in ways that are hard to detect until you’re across from a grandmaster with no help coming and a position you only half-understand.

At this point, just about all of us use our own version of AlphaZero (ChatGPT, Claude, or Gemini) on a daily basis, and I’m as AI-pilled as anyone. I use Claude and Claude Code every day. These tools are genuinely transformative. But I’ve started noticing something in myself and in people around me: a creeping dependency that feels like productivity but might be atrophy.

The risk isn't that AI makes us immediately dumber. It's that we stop developing judgment: the taste, the intuition, the ability to know what "good" looks like before you can fully articulate why. Carlsen's edge isn't calculation; he'll tell you himself he's "really bad at solving exercises." His edge is evaluation, a felt sense of a position that was built over years of sitting with difficult problems and working them out.

A recent study out of the University of Hong Kong tracked 61 students generating creative ideas over 30 days. The group using ChatGPT outperformed on every metric for the first five days. More ideas, higher scores, better output. Then the researchers took ChatGPT away. Every creativity gain vanished overnight, back to baseline. More surprisingly, while the students were using ChatGPT, their ideas became increasingly identical to each other. They had the same structure, the same framing, the same ideas with slight variation. And when ChatGPT was removed, that homogenization didn’t reverse. Thirty days later, their creative range was still narrow. It’s one small study, and I’d bet it fails to replicate like most psych research, but anecdotally, a lot of the AI slop I read and see converges in the same way. This is probably worth keeping in mind.

I review around a thousand pitch decks a year. The ones written with heavy LLM assistance all sound the same: correct, defensible, and completely devoid of any original insight about their market. The founders who stand out are the ones who've clearly sat with their problem long enough to develop a point of view that no model would generate. The AI-written decks are full of conventional wisdom. The good ones are windows into something the founder sees that nobody else does yet. They're surprising.

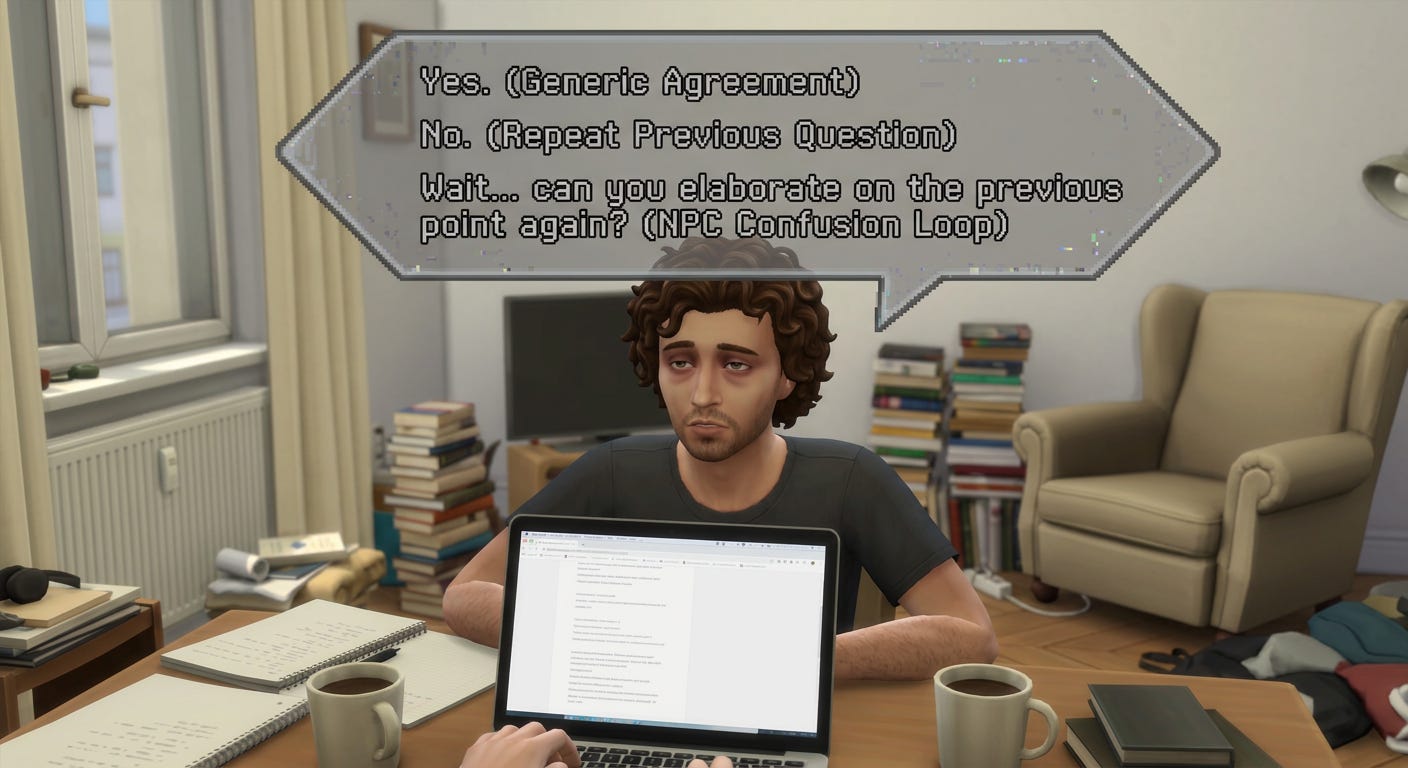

We all know the person who responds to everything in that polished, hedged, ChatGPT cadence. Every email, every Slack, every doc, all with the same structure, same qualifying phrases, same bloodless precision. That person has outsourced their writing and their thinking. They've gone full NPC.

My parents had to print MapQuest directions before road trips. Their generation developed real spatial awareness. They could read topography, orient by landmarks, and read a map. Google Maps is pure upside for most purposes. But a kid who grows up with GPS narration from age five just doesn’t know where they are.

The question is whether you’re using AI in a way that makes you sharper, or in a way that substitutes for sharpness you’d otherwise be building. Those paths look identical in the short term, and in fact the latter is much easier.

I remember being a software engineering intern at Amazon a decade ago and not knowing how to ask good questions. I’d walk up to my senior engineer with a half-formed problem and he’d ask: did you try X? Did you check Y? Did you look at Z? If I hadn’t exhausted my options, he simply wouldn’t answer the question. So I learned. I started showing up with a list of everything I’d already tried before asking for help. And as you’d expect, I started to become more self-sufficient. I felt proud when I solved problems on my own, and when I couldn’t, when my questions actually stumped even him, that felt even better. I’d earned the right to ask.

AI short-circuits that entire process if you let it. Instead of sitting with the problem, trying approaches, building the muscle of figuring things out, you paste the error message into Claude and get an answer in four seconds. The answer is usually right. But you’ve skipped the part where you become the kind of person who can solve the next problem without help. If you’re not going to try it yourself, at least read through the fix so you build your understanding. Too often, I find myself using Claude Code to fix a bug, skipping that part, and moving on to the next thing now that the error is gone.

Carlsen got it right. He used AlphaZero not as a crutch but as a lens, a way to re-examine a game he’d been studying his whole life with fresh eyes. Then he sat down at the board alone and played his own chess. When I reach for the prompt box, I do my best to ask myself: am I using this the way Carlsen uses AlphaZero, to see something I couldn’t see before? Or am I using it to avoid the discomfort of figuring something out myself? He knows the answer every time he sits down at the board. We should probably know it too.